I posted that on X back in January of 2024.

I believed it then. I believe it now. But I’m starting to wonder if the rest of the internet got the memo, because things have gotten significantly worse.

I use Firefox. I’ve written about this before. I use it because it’s open source, because Mozilla isn’t Google, and because Google already has enough of my data without me handing over my entire browsing history on top of it. I even wrote a Firefox extension to fix a hotkey change that Mozilla made in Firefox 88 that messed up my workflow. I’m committed to this browser. I don’t want to leave.

But the web is making it really, really hard to stay.

The Numbers Are Brutal

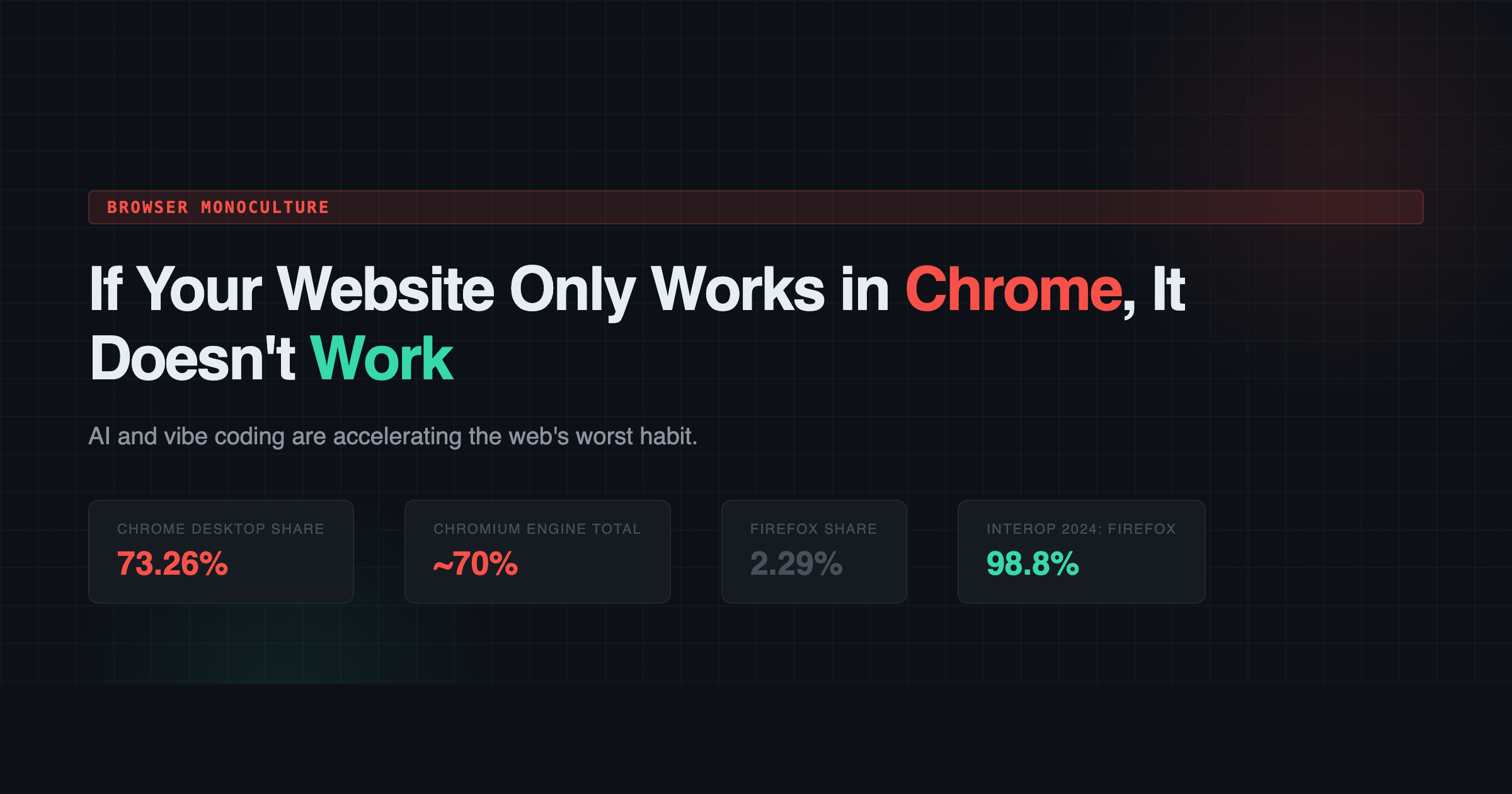

Let’s just look at where we are. According to StatCounter, Chrome’s global market share was 65.87% in 2022. By 2025, it climbed to 68.35%. On desktop specifically, it’s sitting at 73.26% as of February 2026. Firefox? It went from 3.04% in 2022 to 2.37% in 2025 to 2.29% now. That’s not a decline. That’s a slow death.

And here’s the thing that makes it even worse: Chrome isn’t the only browser running on Google’s engine. Edge, Brave, Opera, Vivaldi, Arc… they all run on Chromium. Chrome alone is at 68% globally, so when you add them all up, well over 70% of browser usage on the planet runs on Google’s rendering engine. Firefox’s Gecko is basically the last engine standing that isn’t either Chromium’s or Apple’s WebKit.

We have been here before. This is IE6 all over again. Except this time the dominant browser is actually good, which makes the problem harder to see and even harder to fight.

Developers Don’t Test Anymore

Here’s where it gets personal. I browse the web every single day in Firefox and I run into broken websites constantly. Not “oh this font looks a little different” broken. I mean login forms that won’t submit. Payment flows that hang. Entire web apps that just show a blank white page. Dropdown menus that don’t open. Modals that trap your focus and never let go.

Mozilla’s own community forums are full of people reporting the same thing. Users on Mozilla Connect describe websites that load in seconds on Chrome but take over a minute in Firefox. E-commerce sites where the payment button literally does not work unless you switch browsers. I can’t even pay my internet bill in Firefox. I’m not kidding.

The MDN Browser Compatibility Report found that only 44% of developers were satisfied with the state of cross-browser compatibility. One developer in that survey said it plainly: “Chrome and Firefox are starting to diverge, with Chrome adding features before they’re fully standardized. As the dominant browser, some pages are being written to only work in Chrome now.”

Another one: “Many APIs are Chrome-only and will never show up in other browsers.”

This is not a Firefox problem. This is a developer problem. The browsers themselves are actually converging on standards. The Interop 2024 project ended the year with 95% of its tests passing in all four of Chrome, Edge, Firefox, and Safari. Firefox scored the highest at 98.8%. Let me say that again: Firefox posted the best score of any major browser on that standards benchmark, and websites still break in it because developers simply do not test.

Enter the Vibe Coders

So that’s the baseline. Developers were already building Chrome-only websites before AI entered the picture. Now let’s talk about what happened when you gave millions of people the ability to generate entire web applications without understanding what they’re generating.

The timeline is almost poetic. ChatGPT came out in late 2022. Anthropic released Claude to the public in July 2023. Claude 3 dropped in March 2024. By 2025, “vibe coding” had become an actual term, coined by Andrej Karpathy that February. The idea is simple: you describe what you want, the AI writes the code, you accept it and move on. You don’t really look at it. You just… vibe.

And during this exact same window, Chrome’s market share went up. Firefox’s went down.

Now, correlation isn’t causation. I’m not claiming AI killed Firefox. But I am saying that AI made an existing problem dramatically worse, and here’s why.

As I mentioned above, developers were already bad at cross-browser testing. But they at least had the knowledge to do it if they wanted to. Vibe coders don’t even have that. They’re accepting generated code without reviewing it. Researchers have called this the “verification gap,” where building has been democratized but testing has not. A study from December 2025 found 69 vulnerabilities across 15 vibe-coded test applications. AI co-authored code showed 2.74x more security vulnerabilities and 75% more misconfigurations than human-written code. If these tools can’t even get security right, do you think they’re generating proper cross-browser fallbacks?

LLMs are trained on the internet, and the internet is overwhelmingly Chrome. When an AI generates CSS, it reaches for -webkit- prefixed properties because that’s what dominates the training data. When it generates JavaScript, it uses APIs that Chrome supports because those are the ones most represented in the corpus. It’s a feedback loop. Chrome dominates, so the training data skews Chrome, so the AI generates Chrome-first code, so more websites only work in Chrome, so Chrome dominates further.
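To make the prefix problem concrete, here is a minimal sketch of a check you could run over generated CSS. It flags `-webkit-` declarations that have no unprefixed counterpart in the same rule. The function name `findWebkitOnlyDeclarations` and the `PrefixFinding` shape are hypothetical, made up for this illustration, and the regex matcher is deliberately naive (no nesting, no `@media` blocks). Some prefixed properties have no standard equivalent at all, so treat the results as things to review, not as confirmed bugs.

```typescript
// Hypothetical helper for illustration; not part of any library.
interface PrefixFinding {
  selector: string; // selector text of the rule containing the declaration
  property: string; // the prefixed property, e.g. "-webkit-backdrop-filter"
  missing: string;  // the unprefixed name that was not declared alongside it
}

function findWebkitOnlyDeclarations(css: string): PrefixFinding[] {
  const findings: PrefixFinding[] = [];
  // Naive "selector { declarations }" matcher. It ignores nested rules and
  // at-rules; a real tool should use a proper CSS parser instead.
  const rulePattern = /([^{}]+)\{([^{}]*)\}/g;
  let rule: RegExpExecArray | null;
  while ((rule = rulePattern.exec(css)) !== null) {
    const selector = (rule[1] ?? "").trim();
    // Keep only the property name from each "name: value" declaration.
    const properties = (rule[2] ?? "")
      .split(";")
      .map((declaration) => (declaration.split(":")[0] ?? "").trim().toLowerCase())
      .filter((name) => name.length > 0);
    const declared = new Set(properties);
    for (const property of properties) {
      if (!property.startsWith("-webkit-")) continue;
      const unprefixed = property.slice("-webkit-".length);
      if (!declared.has(unprefixed)) {
        findings.push({ selector, property, missing: unprefixed });
      }
    }
  }
  return findings;
}

// Example input: the first rule pairs the prefix with the standard property,
// the second rule ships only the prefixed form.
const sample = `
.card  { -webkit-user-select: none; user-select: none; }
.title { -webkit-backdrop-filter: blur(4px); }
`;

// Only the .title rule is reported, with "backdrop-filter" as the missing name.
const findings = findWebkitOnlyDeclarations(sample);
```

A check like this won’t tell you whether a page actually works in Firefox. It only catches one narrow, mechanical symptom of Chrome-first output.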

Even some of the vibe coding platforms themselves are part of the problem. Bolt, one of the popular ones, straight up tells you it “works best on Chrome and other Chromium-based desktop browsers.” The tools used to build the web are now themselves Chrome-only. Let that sink in.

The IE6 Lesson Nobody Learned

In the early 2000s, Internet Explorer had somewhere around 95% market share. Developers built “works best in IE” websites. ActiveX controls everywhere (lol remember that?). Proprietary extensions that only worked in Microsoft’s browser. The web became a monoculture, innovation stalled, and it took years to dig out of that hole. Firefox was literally born to solve that problem.

We are doing the exact same thing again, except this time it’s Google instead of Microsoft, and this time we have AI accelerating the consolidation at a pace that makes the IE era look quaint.

Google reportedly makes up 60-70% of W3C meeting attendees. They are not just building the dominant browser. They are driving the standards process itself. The fox is guarding the henhouse, and the hens are writing Chrome-only websites with AI tools that don’t know any better.

I Might Have to Switch

I never thought I’d write this. I have used Firefox for a long time. I believe in what it represents: an open, independent web where no single company controls how you experience the internet. I’ve written more than one browser extension for it, I’ve reported bugs, I’ve defended it in conversations more times than I can count.

But I’m tired of being the person who has to keep a second browser around for when things don’t work. I’m tired of hitting a login page and wondering if the button is broken or if it’s just Firefox. I’m tired of doing a double-take every time a website looks weird, trying to figure out if it’s a bug or if the developer just never opened anything except Chrome.

At some point, principle runs into practicality. And right now, using Firefox on the modern web feels like bringing a perfectly good car to a highway that was paved exclusively for trucks.

What’s Actually at Stake

If Firefox dies, and its market share trajectory suggests that’s not a hypothetical, we lose the last truly independent browser engine. Every browser will be either Chromium or WebKit. Google will effectively control how the web renders for almost everyone on the planet.

A single Chromium vulnerability would affect the vast majority of browser users globally. A single change to how Chromium handles ads, tracking, or content would ripple across billions of screens. One company. One engine. One point of failure.

The U.S. Department of Justice proposed in November 2024 that Google divest Chrome entirely. Analyst estimates at the time put its value at around $20 billion. Whether that happens or not, the fact that it’s even being discussed should tell you something about how consolidated things have gotten.

I don’t have a clean solution here. I can’t make developers test in Firefox. I can’t make AI tools generate cross-browser code. I can’t single-handedly prop up a browser engine’s market share.

But I can say this: if you’re a developer, open Firefox. Load your site. Click around. Fill out a form. Try to pay for something. If it doesn’t work, fix it. It’s that simple.
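If clicking around by hand doesn’t stick as a habit, one way to automate a first pass is a test runner that launches more than one engine. The sketch below assumes Playwright Test (`@playwright/test`) is installed, which is my assumption and not something this post depends on. The `./tests` directory is a placeholder. It is a config fragment, not a complete setup.

```typescript
// playwright.config.ts
// Sketch only: assumes @playwright/test is installed and that your tests
// live in ./tests (a placeholder path).
import { defineConfig, devices } from '@playwright/test';

export default defineConfig({
  testDir: './tests',
  // Every test runs once per project, so a flow that only works in
  // Chromium shows up as a Firefox or WebKit failure.
  projects: [
    { name: 'chromium', use: { ...devices['Desktop Chrome'] } },
    { name: 'firefox', use: { ...devices['Desktop Firefox'] } },
    { name: 'webkit', use: { ...devices['Desktop Safari'] } },
  ],
});
```

With a config like this, `npx playwright test` runs the suite against all three projects. Playwright ships its own browser builds, so this is a safety net, not a replacement for opening the real Firefox and trying to pay for something.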

And if you’re building websites with AI and you’re not testing the output in multiple browsers, you’re not building websites. You’re building Chrome extensions with extra steps.

If your website only works in Chrome, it doesn’t work.